Building an Agentic AI System to Extract Specific Information from 1000+ Company Websites

In 2026, the landscape of data acquisition has fundamentally transformed. The days when gathering structured information about thousands of disparate companies—such as CEO full names, precise operational industries, headquarters locations, estimated annual revenues, and direct contact emails—relied on tedious manual curation or fragile, rule-based web scraping techniques are long gone.

As businesses increasingly prioritize speed and data fidelity, we have evolved past the traditional constraints of data collection. I have recently constructed and deployed an Agentic AI system that is profoundly simple yet extraordinarily powerful. This architecture allows developers to autonomously search, intelligently collect, and precisely extract targeted data fields from massive sets of company websites. Crucially, this is achieved without writing a single fixed CSS or XPath selector for any individual page.

By treating the web as an unstructured knowledge graph rather than a collection of fixed HTML documents, this system uses advanced Large Language Models (LLMs) and specialized scraping frameworks to essentially “read” websites like a highly trained human analyst.

The Traditional Problem: Breaking the Mold of Legacy Web Scraping

Before we dive into the agentic solution, we must address why the old paradigm no longer scales in today’s dynamic web ecosystem.

For the past decade, engineers relied mainly on DOM parsing libraries and headless browsers, instructing their code to target specific elements using HTML classes or XPath queries. However, this approach introduces several massive bottlenecks when attempting to extract niche data from thousands of unique corporate domains:

- Unpredictable and Fragile Structures: Every company builds their website differently. A class name that works perfectly for identifying the “About Us” section on one site might be entirely absent or obfuscated by modern CSS frameworks (like Tailwind or styled-components) on another site. When a company redesigns their landing page, traditional scrapers physically break, throwing null reference errors and halting pipelines.

- Heavy JavaScript Rendering Requirements: Many contemporary corporate sites are Single Page Applications (SPAs) built on React, Vue, or Angular. They load substantial content asynchronously. To scrape these sites effectively, developers are forced to utilize full browser automation, which demands intense compute resources and memory overhead. Managing fleets of headless Chrome instances is notoriously difficult.

- The Accuracy vs. Volume Trade-off: When relying on non-agentic methods, you typically face a dilemma. You must either write thousands of bespoke scraping routines tailored specifically for each domain, or you resort to dumping the entire raw HTML payload of every site into a data lake to be parsed later. Neither is optimal. Dumping raw HTML bloats storage with redundant navigation elements, tracking scripts, and styling metadata.

- Scaling Frictions: When we scale operations to encompass 1000+ corporate websites, the sheer administrative overhead of maintaining logic becomes paralyzing. It leads to easily triggered anti-bot mechanisms, frequent blockages, and an agonizingly slow feedback loop. Conversely, migrating to commercial data extraction services can result in exorbitant operational costs.

The Solution: A Resilient Agentic AI Pipeline

To combat the inherent fragility of legacy scraping, I transitioned strictly to an Agentic AI pipeline. An agentic system alters the fundamental relationship between code and target: instead of telling the system exactly how to locate data step-by-step, we declare what our objective is. The AI planner determines the strategy, manages page transitions, navigates DOM variations, and extracts the target attributes autonomously.

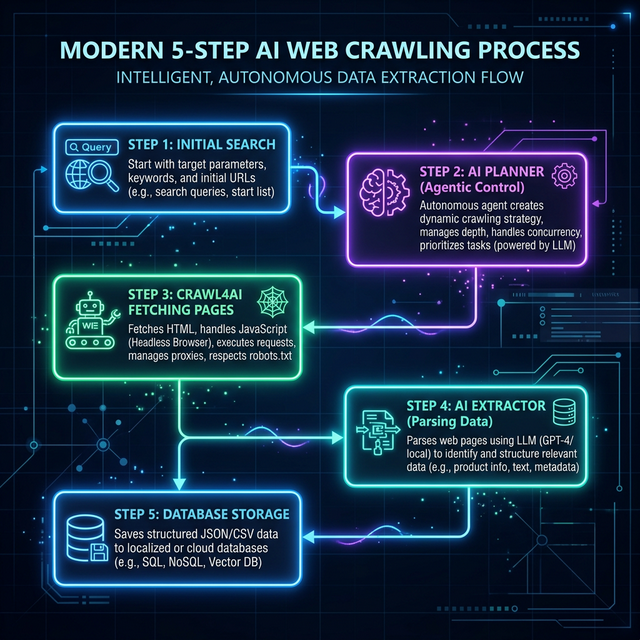

The architecture operates seamlessly across five modularized steps, combining open-source scraping engines with the semantic understanding capabilities of modern LLMs.

Step 1: Discovery and Intelligent Domain Collection

Before you can extract corporate data, you need the correct, authoritative source. Many legacy systems rely on statically purchased domain lists which often contain outdated links, abandoned side-projects, or incorrect regional variations.

Instead, our system utilizes SerpAPI (Google Search API integration) to perform dynamic, real-time discovery. The AI constructs search queries logically, such as “[Company Name] official corporate website”.

After scanning the top search engine results, the system does not simply take the first link blindly. Instead, an intermediary evaluation step (often a lightweight local LLM or heuristic check) verifies the credibility of the primary links, immediately distinguishing genuine brand homepages from Wikipedia articles, LinkedIn directory pages, or third-party aggregators. This establishes a high-confidence foundation for the crawl.

Step 2: Extraction Planning (The Agentic Planner)

Once we have verified target URLs, the Agentic Planner is engaged. Instead of pre-formatting rigid code, we supply the planner with natural language goals: Provide the full name of the CEO, the core industry focus, the city where the headquarters is located, any listed estimated annual revenue figures, and primary contact emails.

The Planner model interprets these goals and dynamically constructs an extraction blueprint. It decides which pages on the domain are most likely to house this data—often realizing that “About Us”, “Leadership”, “Investor Relations”, or “Contact” pages are far more valuable than the core index page. It then yields a precise, structured JSON schema mapping to our requirements. This adaptive planning ensures our system behaves with human-like intuition across thousands of unstandardized layouts.

Step 3: Mass Web Crawling Infrastructure

With a plan in place, the system dispatches extraction tasks to an open-source marvel optimized expressly for LLM workflows: Crawl4AI.

Crawl4AI acts as the heavy-lifting engine. Unlike basic request libraries, it is built to interface directly with agentic ecosystems. The crawler natively handles complex JavaScript rendering, efficiently executing asynchronous requests through robust headless browser capabilities. Given the scale of 1000+ websites, Crawl4AI supports high-concurrency requests combined with sensible rate limiters, ensuring that target servers are not overwhelmed while remaining incredibly fast.

Crucially, Crawl4AI performs an aggressive sanitization process on the retrieved content. It intelligently strips away navigation banners, footers, advertisements, cookie popups, and embedded tracking pixels. The remaining core content is converted from noisy HTML into clean, deeply semantic Markdown. This Markdown conversion is paramount—it dramatically reduces token bloat before the data hits the LLM, preserving crucial context windows and significantly driving down tokenized inference costs. The system also natively respects robots.txt instructions and incorporates exponential retry logic for ephemeral network failures.

Step 4: Structured Data Extraction via AI

This phase represents the cognitive leap over traditional scraping. Once Crawl4AI serves the pristine Markdown, the AI Extractor Agent steps in.

Because the data is now presented as clean textual context, the Extractor relies purely on its semantic reasoning. It does not look for a div with the class ceo-name; instead, it understands the English-language context surrounding personnel announcements or leadership directories. Even if the website designer hides the CEO’s name inside an obscure image alt tag or an unordered list, the AI Extractor confidently isolates the entity.

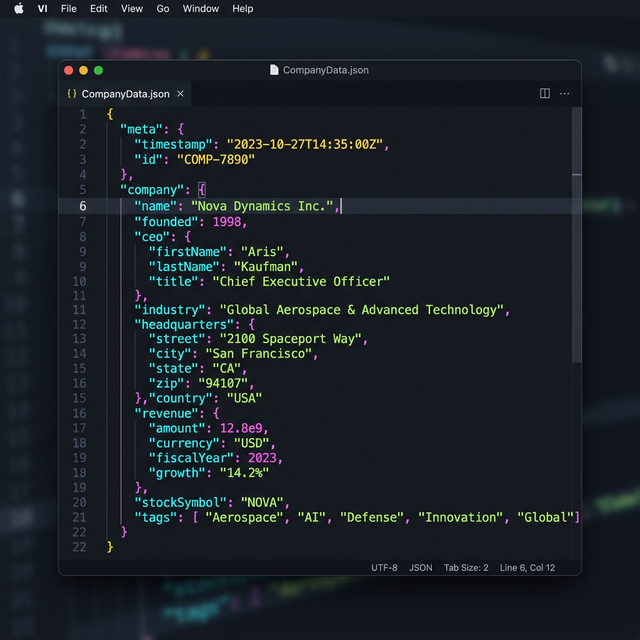

The agent matches this discovered information against the strict JSON schema defined by the Planner in Step 2. It formats revenues uniformly, standardizes location syntax, and parses missing fields cleanly without throwing catastrophic errors. The resulting output is impeccably structured, error-free JSON data.

Step 5: Finalization, Storage, and Utilization

With the raw text successfully converted into clean, validated JSON schemas, the final step writes the outputs to structured environments—whether that is a batch of cohesive CSV files, an SQL database, or embedded into vector stores.

Having access to beautifully structured data from unstructured corporate frontiers equips businesses with an operational superpower. This dataset immediately accelerates high-tier lead generation efforts, enables granular market research, fortifies competitive analysis platforms, and automatically feeds updated profiles directly into enterprise CRM ecosystems.

Key Benefits of the Agentic Approach

Embracing this automated, dynamic paradigm delivers massive technological dividends for engineering teams and data researchers alike.

- Specific and Flexible: Because the LLM inherently comprehends semantic meaning, it accurately extracts correct data fields regardless of sweeping DOM changes or front-end redesigns. The structural variability of target websites ceases to be a liability.

- Hyperscalable operations: By integrating asynchronous crawling via Crawl4AI and leveraging cost-efficient open-source LLMs where appropriate, you can process thousands of sites for a fraction of the cost associated with massive legacy cloud operations.

- Agentic Extensibility: The modularity of this system makes it trivial to expand. You can instantly plug in a “Validator Agent” to double-check AI hallucinations, or an “Enrichment Agent” to aggregate the newly extracted business data with external public financial registers for a more comprehensive dataset.

- Zero Maintenance of Selectors: This is perhaps the greatest triumph. The perpetual overhead of updating XPath and CSS selectors as websites evolve is eliminated, driving technical maintenance costs closer to zero.

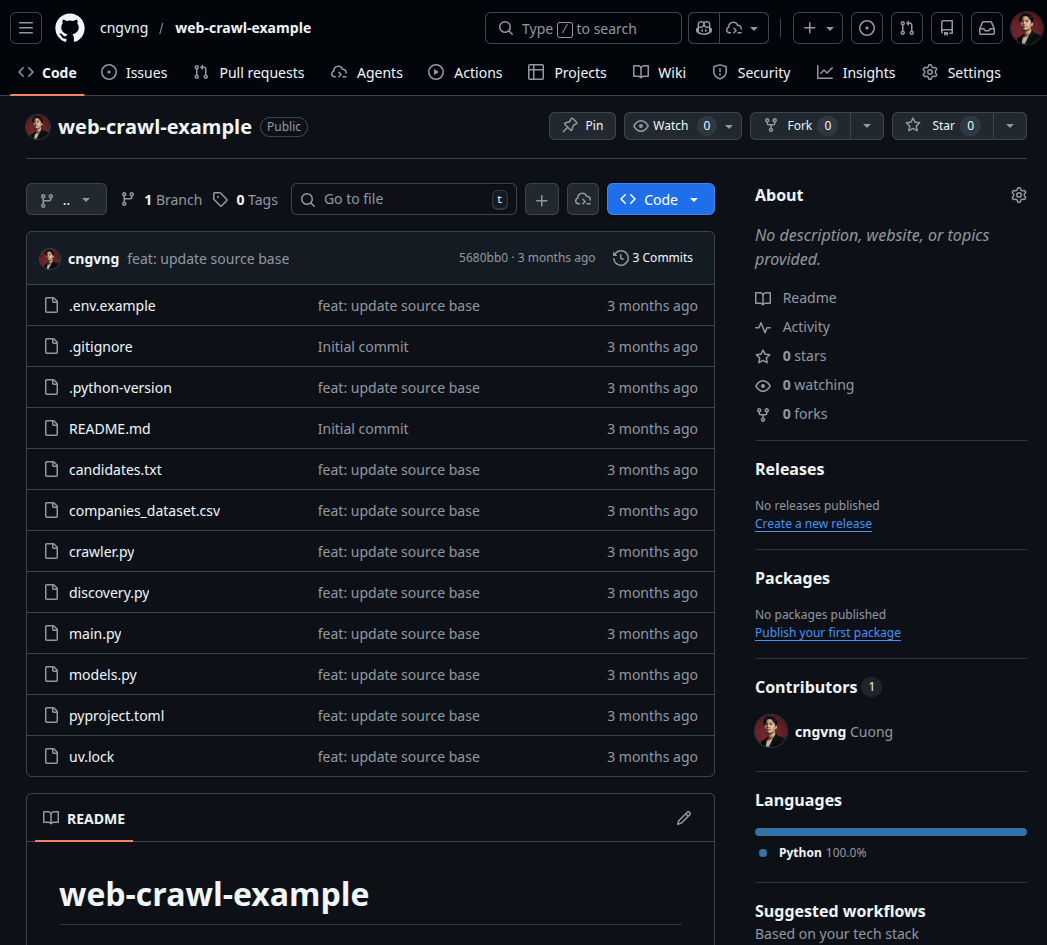

Where to See the Code in Detail

We believe strongly in the democratization of these agentic tools. While this article focuses on the overarching architectural strategy and logical flow, the complete underlying deployment code is fully open-sourced.

The expansive repository details the entire inner workings of the pipeline. You will find comprehensive configurations detailing how we bind SerpAPI to the discovery planner, the exact initial context prompts designed for the specialized Extractor and Planner LLMs, advanced configurations for managing JavaScript timeouts in Crawl4AI, and battle-tested logic for large-scale parallelization and graceful error handling.

You can inspect all the components, fork the project, and contribute here: https://github.com/cngvng/web-crawl-example.git

Feel free to clone the repository, inject your own API access keys, configure the proxy servers if needed, and run a test iteration against your custom defined target list of corporations.

Conclusion & Call to Action

Agentic crawling isn’t just an iterative improvement; it is actively rewriting the fundamental rules of web-scale data collection. When we look at the immediate horizon, the integration of intelligent scraping pipelines alongside burgeoning protocols like A2A (Agent2Agent) and MCP (Model Context Protocol) opens up extraordinary new frontiers.

In the very near future, crawling systems like this will not operate in isolation. They will seamlessly federate with global networks of agents, becoming localized nodes that perpetually research, negotiate, and update enterprise knowledge layers autonomously.

If you are currently architecting data ingestion pipelines, researching AI integration within traditional SaaS, or experimenting with multi-agent orchestration, right now is the optimal time to embrace Crawl4AI and the agentic model.

We are moving away from telling code how to crawl, toward telling intelligent agents what to investigate.

Have you tested agentic pipelines for massive data extraction, or experienced the fragility of traditional scrapers firsthand in your recent projects? Share your insights, challenges, and perspectives in the discussion below!